Reliable Synthetic Data Generation

Reliable Synthetic Data Generation

As we’ve seen the rapidly rising impact of LLMs, we’ve also seen the growing importance of “synthetic data,” generated instructional raw text used to train LLMs for specific tasks without the need to mine from real human conversations.

Synthetic data has been successfully used many times to improve the performance of neural networks. Some examples include self-driving cars, which are trained on synthetic data alongside real-world data, and object detection models, which improve performance by training on synthetic datasets generated by GANs (generative adversarial networks).

The names for these techniques continue to evolve and change, but, regardless, all of them are a form of “data augmentation.” Data augmentation is not a new concept. It has been applied and battle-tested thoroughly in every field of machine learning. We can leverage data augmentation for any modality in various forms. The same is true for LLMs. So, why the sudden buzz around synthetic datasets?

Motivation for leveraging synthetic datasets

Large language models are typically trained on web-scale datasets. Given that these models scale well with the size of the dataset, there is no reason to believe that we have achieved their peak potential performance.

The general perception is that LLMs have essentially consumed all of the data on the internet. This is far from indicating that LLMs have reached their peak performance, however. We must consider the potential means of acquisition of quality data. It has been shown that quality data leads to better models with far smaller parameter sizes.

One way to overcome this blocker—that is, the inherent finite nature of data available on the web—is to leverage synthetic datasets for further training. People have leveraged synthetic datasets to train smaller models that perform on par with bigger models at certain tasks. The Phi series is a good example.

Quality matters, even for synthetic datasets.

Consistently, it’s true that the higher the quality of a dataset, the better the performance of the model trained on it. The same concept applies to synthetic data. We see the benefits of using a synthetic dataset only if it is meaningful and of good quality. Phi-3 models are a testimony of this.

What can go wrong in synthetic dataset generation?

Noticeable in today’s research papers is the common mention of leveraging synthetic datasets to improve model performance, but hardly any descriptions of the components of the related synthetic datasets’ generation pipelines.

Generating a reliable synthetic dataset is a complex process. One needs to be aware of the pitfalls of this process. Using a noisy dataset to generate synthetic data can lead to a few silent failures.

Let us take an example to understand the above point a bit better. Let us say we want to build a closed-domain Question-Answering system. We have a dataset (context, question -> answer) that we can use to fine-tune a language model. Each sample consists of multiple paragraphs and is noisy (contains missing figures, unnecessary Unicode characters, and other formatting issues). Our dataset consists of exactly one pair of question-answer for a given context. A synthetic dataset here means an additional pair of generated question-answers for the same context.

LLMs today are incredibly powerful, and leveraging them to generate synthetic data is a natural choice. We can utilize GPT-4 or Llama2-70B for the task defined above. We will pass a context as an input prompt to a large language model and ask it to generate a pair of question-answers relevant to the given context. So, what are the possible pitfalls one can run into in this process? Let us take a look at a few of them:

Hallucinations

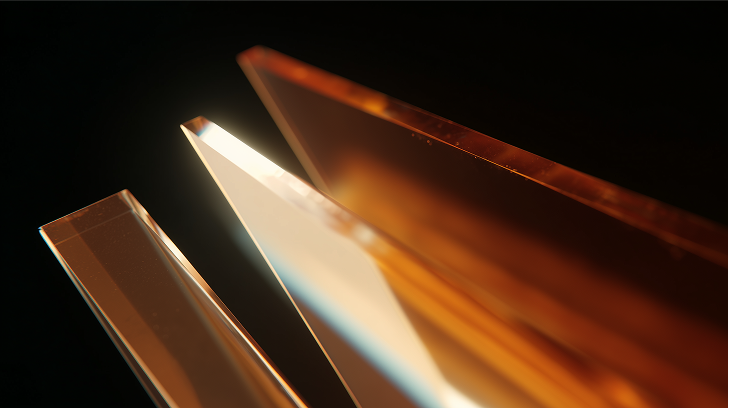

The image shown below depicts a non-trivial type of hallucination. Although the generated text is from the input context, it contains a lot of garbage content. For example, the generated answer consists of a chunk of gibberish present in the input context.

Complexity of the synthetic dataset

After a certain point, easy (low-complexity generated) examples provide diminishing returns in terms of performance. Hence, we want to incorporate more complex examples for better performance gains. The definition of complex or hard examples is use-case dependent; an example of a complex question-answer is one where the answer to a question consists of more than a few words. For example, look at the figure below and think about choosing one from the two generated samples based on the complexity of the generated text. Though both the generated samples are good, the second is more complex than the first one.

.png)

.png)

Incomplete or missing text

If we are using a hosted model (like GPT-4) via an API, then running into issues like unstructured outputs or outputs with disorderly fields is not uncommon. Look at the figure below and notice the missing generated question. Debugging this behavior is not straightforward, as factors like bad inputs, broken tokenization, lack of constraints, hallucination, etc., could be inducing this behavior.

.png)

There are many other nuances to this process that are use-case dependent. That is why generating synthetic datasets in an automated fashion is not a solved problem. An automated synthetic dataset generation pipeline is helpful only if it's positively known to be reliable.

There are several ways to address these problems. Either you can use tools like Instructor, DSPy, Langchain, etc., to solve these issues, or depending on the use case, you can write a few checks that are a part of your data generation pipeline. Which should one choose? We will talk about this in detail in the FAQ section later. First, let us see how to address the issues listed above for the current use case.

The art of generating synthetic datasets

Before jumping on the solution, let us contemplate the possible fixes to address these issues. Here are a few of them:

- Bigger or better model: Is the model capable enough to perform well on this task? Will a bigger model produce better data?

- Prompt optimization: Are the instructions in the prompt not good enough? Should we optimize the prompt? Can techniques like Chain Of Thought (CoT), or Tree of Thought (ToT) help refine the generated text?

- Constraints on generation: Can constraints (e.g. no links allowed, generated text should contain more than a few words, etc.) help the model generate structured outputs? If yes, should the constraints be applied on the prompt level or the text generation level?

These are some good experiments for figuring out the optimized data generation pipeline. A combination of such considerations is powerful enough to generate synthetic datasets reliably.

Data generation requires an extensive feedback loop

Semi-supervised dataset annotation is common across computer vision tasks where the collected dataset is first (partially) annotated by a computer vision model, then human annotators refine those annotations in the final pass. It is a kind of external intervention in the data annotation pipeline.

Inspired by the above, we can have feedback loops within the data generation mechanism that help to generate fine-grained reliable synthetic datasets. We can either have a human in the loop here or put a bigger LLM (bigger than the one currently used for generating the dataset) as a verifier in this loop. In many cases, a human in the loop is necessary for verification and should not be replaced by an automated system. The task we have on hand is a much simpler one, and an LLM can provide the feedback instead. This is similar in principle to the Chain-of-Verification and other similar techniques.

So, what kind of feedback mechanisms can we implement for the question-answer generation task? Ensuring correctness is equivalent to passing unit tests. Here are a few examples:

- Null check: In one of the examples in the above section, we noticed that the generated text was empty. Hence, one of the checks can be for a missing value for a particular field. If any data field (the generated question or the generated answer) is empty, we should regenerate.

- Faithfulness check: The LLM tasked to generate the dataset can hallucinate in multiple forms. Faithfulness check ensures the generated text does not contain facts outside the context provided in the input.

- Answerable check: What if the LLM generates a question that is from the input context, but it can not be answered from it? We would like to generate a question-answer pair where the question is answerable using the context.

These are a few checks we can implement in the feedback loop. We can implement the feedback loop as a standalone py file and integrate it in the dataset generation pipeline, but for the sake of demonstration, we will use DSPy here to implement the same.

Step 1: Define your inputs-outputs

Here we are inheriting the Signature class to tell the LM what it needs to do. In our case, the LM is tasked to generate a pair of question-answer from a given context. Hence, the context is defined as the input field, while question and answer are defined as the output fields.

Step 2: Define your assessment

Why do we need assessments? Remember the explicit feedback loop we talked about in the previous section? The Assess class provides a common interface for doing assessments on the fly. Assessments are presented as a series of questions passed to a particular LLM that responds with a boolean value of yes or no as the answer to a specific question.

Step 3: Main module with assessment feedback loop

We have defined our main question-answer generator and the evaluator (assessment checks). Now, we need to integrate these pieces into a single module. Let's do that. Here is a simple way to achieve this in DSPy:

Let us break down what is happening in the forward pass of the GenerateQAWithAssertions module.

- First, we sample a data point (context) from our dataframe.

- We pass this selected context to the LLM responsible for generating a pair of question-answer using this context. We are using chain-of-thought for the

GenerateQAmodule to generate the question-answer pair. We use GPT-3.5 as the generator LLM. - Once we have the response from the QA generator, we enter the feedback loop where the generated question-answer pair is evaluated for the type of assessments we are interested in for this particular task. Here, we use the

dspy.Suggestmodule for evaluation. If an assessment fails, it retries to generate a response until the assessment has been passed or a maximum number of retries have been attempted. - The first two assessments are null checks and they ensure that the generated question or the generated answer field is not empty. These checks don't require an LLM for correctness check and are implemented as simple Python functions.

- For other checks, we use GPT-4 to check if the generated question is answerable from the given context, if the generated question or the generated answer contains any garbage characters, and if the generated question is from the provided context and contains no hallucinated content.

- Once all the assessments are passed, we return the generated question-answer pair and the original context as an object of the

dspy.Predictclass.

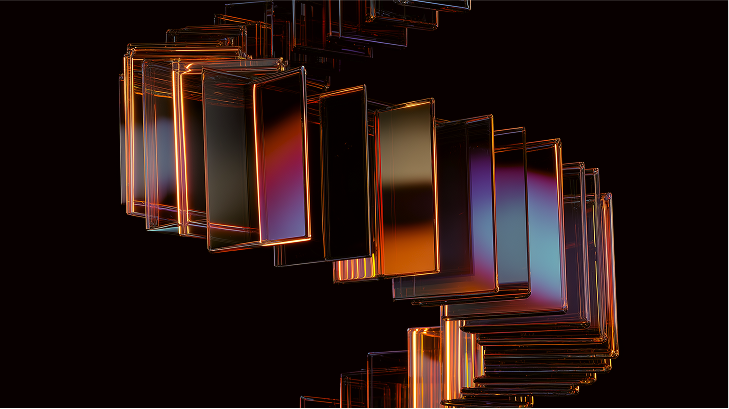

This is how we generate synthetic data reliably. There are chances that we will not be able to generate data corresponding to each point. But we can surely expect to increase the size of our dataset by almost double using this approach. We can then fine-tune any model suited for this task on top of this dataset (a mixture of original plus synthetic datasets). Let us take a look at some examples and compare the approach of generating data with assertions and without assertions:

.png)

FAQs

- Can I use third-party tools or custom scripts to generate data?

It depends. If you are ready to learn to use a new tool, and can use that code in production, that it may be a good idea to use tools like DSPy or LangChain. On the other hand, there is nothing special about these tools. You can achieve the same task by writing your custom modules and scripts. It also gives you a lot of space for optimization in production. The only downside is that writing these modules and testing them on a wide variety of use cases takes much more time.

- Does this eliminate the need of human-in-the-loop entirely?

Again, it depends. For example, if you want to ensure that the generated text is of high-quality, and is free from any kind of toxicity, then you may still want to have a human in the loop for that acts a final filter for your dataset. In some scenarios, it is possible to get away with these checks using a certain metric that you can use as a signal for keeping or dropping a generated sample.

- What are some practical applications of this approach?

A self-improving LLM is one of the best applications of this. Let us take an example to understand this in more detail. Suppose you are fine-tuning some LM on some task (say question-answering). Once the fine-tuning is finished, the system checks the generated report containing all the relevant metric, and the metrics fall short on the value that is desired for this task. At this point, there are two options to improve the performance of the model:- Tuning the available hyper parameters to achieve the desired performance.

- Collect more data, and retrain to see how far can you go before tuning the hyper parameters

Here the second option is much cheaper. With the method described above, we can extend the size of our dataset and try boosting the performance of the model before running a randomized grid-search for automatic hyper-parameters tuning.

References

- DSPy: Programming—not prompting—Foundation Models

- DSPy tutorials from the OSS community

- Large Language Models Can Self-Improve

- Toward Self-Improvement of LLMs via Imagination, Searching, and Criticizing

- Self Rewarding Language Models

- GPT-4

More From the Journal

Trust by Design: Our UX Principles for Building Trustable Data Agents

Agentic AI for Enterprise Needs Much More than LLMs