Ensuring Safe and Respectful Online Spaces: A Look at AI-Based Text Moderation

With the explosion of user-generated content, moderating digital conversations has become critical to keeping online platforms welcoming and respectful. Across the AI community, a variety of techniques, from detailed multi-attribute scoring to constitution-based frameworks, are being explored to address the complexities of filtering out harmful or off-topic material.

At Emergence, we’ve been experimenting with two specific approaches:

General Text Moderation, which tags content across multiple dimensions such as “Derogatory,” “Insult,” or “Toxic.”

Child Text Moderation, which uses a “constitution” (or set of rules) to align content with specific age groups—elementary, middle, or high school.
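To make the two ideas more concrete, here is a minimal Python sketch. It is purely illustrative and not how our models work internally: the threshold values, the `CONSTITUTION` mapping, and the function names `tag_content` and `allowed_for` are all hypothetical, and a real constitution would be a set of written rules rather than a simple lookup table.

```python
# Hypothetical per-attribute thresholds (assumed values, not from our system).
ATTRIBUTE_THRESHOLDS = {"Derogatory": 0.5, "Insult": 0.5, "Toxic": 0.5}

# Hypothetical "constitution": for each age group, the tags that are not allowed.
CONSTITUTION = {
    "elementary": {"Derogatory", "Insult", "Toxic"},
    "middle": {"Derogatory", "Toxic"},
    "high": {"Toxic"},
}


def tag_content(scores, thresholds=ATTRIBUTE_THRESHOLDS):
    """Return every attribute whose score meets or exceeds its threshold."""
    return [name for name, limit in thresholds.items()
            if scores.get(name, 0.0) >= limit]


def allowed_for(age_group, tags):
    """Return True if none of the tags are disallowed for the age group."""
    return not (set(tags) & CONSTITUTION[age_group])


# Example: scores a model might assign to one message (made-up numbers).
scores = {"Derogatory": 0.1, "Insult": 0.8, "Toxic": 0.2}
tags = tag_content(scores)
print(tags)
for group in CONSTITUTION:
    print(group, allowed_for(group, tags))
```

The point of the sketch is the difference in shape: general moderation returns a set of labels for a piece of text, while the child-focused approach answers a yes/no question relative to a chosen age group.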

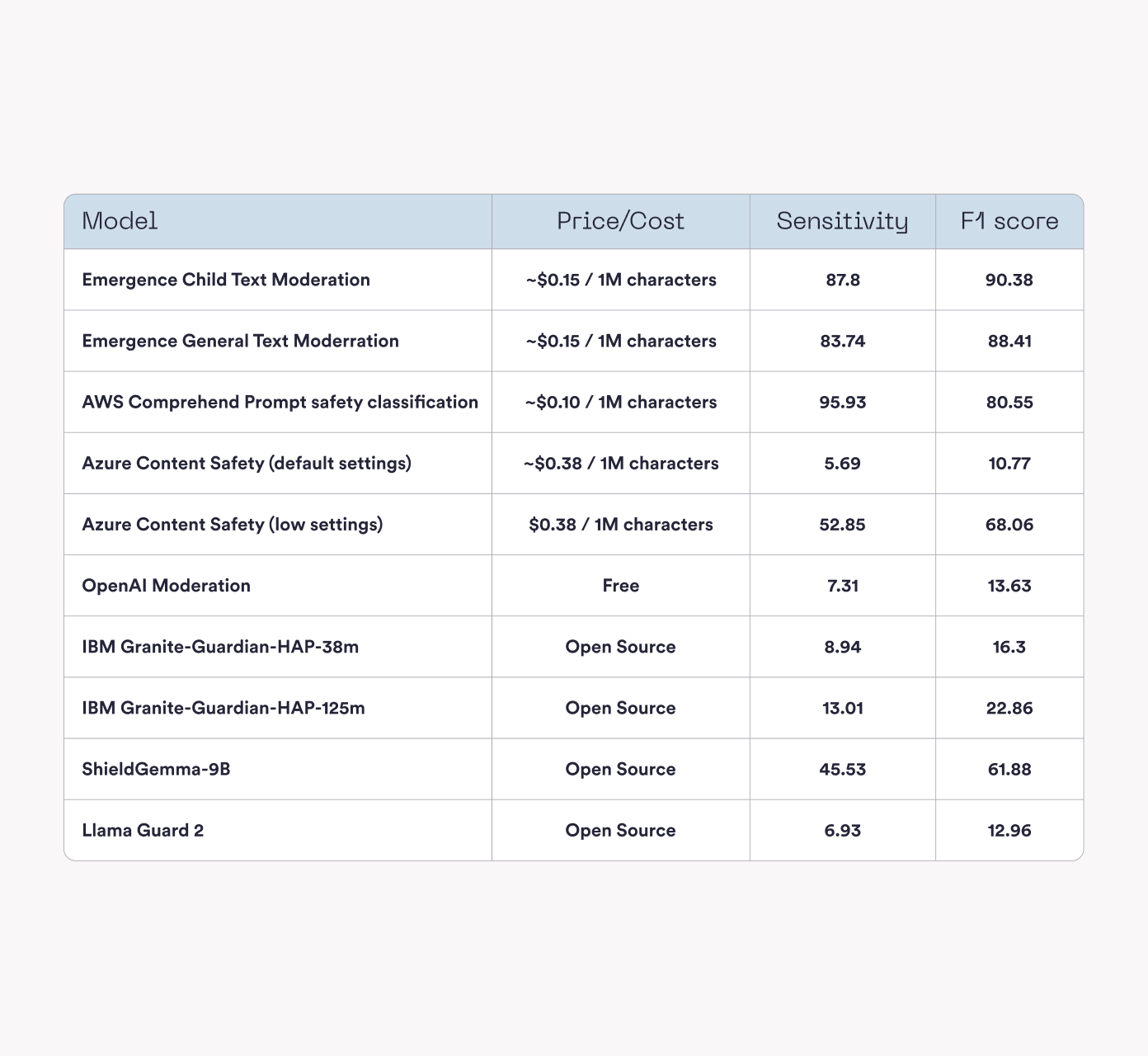

Recently, we ran several popular models head-to-head, comparing sensitivity (the share of problematic content a model actually catches, also called recall) and F1 score (the harmonic mean of precision and recall, which rewards catching issues while avoiding false positives). The table below highlights some results from our testing:

Overall, different models show different strengths. Some favor high sensitivity (flagging a broad range of content), while others aim for a balance of precision and recall (reflected in the F1 score). Our own experiments with multi-attribute and constitution-based moderation reflect the variety of ways AI can help address the complex task of policing online content.
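For readers who want to see how the two metrics relate, the following Python sketch computes sensitivity and F1 from binary labels using their standard definitions. The label lists are invented toy data, not results from our testing, and the function name `sensitivity_and_f1` is our own for this example.

```python
def sensitivity_and_f1(y_true, y_pred):
    """Compute sensitivity (recall) and F1 for binary labels (1 = problematic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)        # correctly flagged
    fp = sum(1 for t, p in pairs if not t and p)    # flagged but harmless
    fn = sum(1 for t, p in pairs if t and not p)    # missed problematic content

    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    if precision + recall == 0:
        return recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return recall, f1


# Toy data: three problematic messages followed by three harmless ones.
y_true = [1, 1, 1, 0, 0, 0]
aggressive = [1, 1, 1, 1, 1, 0]   # flags broadly: catches everything, two false positives
cautious = [1, 1, 0, 0, 0, 0]     # flags narrowly: misses one, no false positives

print(sensitivity_and_f1(y_true, aggressive))  # sensitivity 1.0, F1 0.75
print(sensitivity_and_f1(y_true, cautious))    # sensitivity ~0.67, F1 0.8
```

In this toy case the broad flagger has perfect sensitivity but a lower F1, because its false positives pull precision down. That is the trade-off described above.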